5 main differences between ETL and ELT

28 September 2021

Do ETL and ELT sound familiar to you? The below article is dedicated to the topic of differences between them.

ETL (Extract-Transform-Load) and ELT (Extract-Load-Transform) are two approaches to the data transfer process to:

- data warehouses (storing data from multiple sources, already processed and prepared for analysis or reporting)

- data repositories (Data Lake, used to store large amounts of raw data).

Both of these methods are therefore used to migrate data from sources to storage, as well as to process them. Despite the fact that they pursue the same goals, there are some differences between them. In both of these methods, the implementation scheme of the entire process is different. They differ from each other not only in the order in which they are performed. For example, ETL is mainly used in data warehouses where the target data is structured and has a specific schema. In contrast, ELT is a more modern approach where data is usually stored in a data repository, not necessarily structured.

Which approach is more effective? The main differences between an ETL and an ELT, their pros and cons will be discussed below.

ETL Details

ETL is a tool that is used to acquire, transfer, and process data from sources to databases – most often to a data warehouse. The collected data is then used for advanced analyzes and reporting.

The ETL process consists of 3 steps: Extract, Transform and Load:

- Extract – this is the stage of identifying data sources. It extracts data from distributed sources and then loads it into the data warehouse to the Staging layer, where the raw data is stored.

- Transform – in this step, appropriate processing of the data structure takes place in terms of the destination of their storage – validation, filtering, data cleaning, etc. At this stage, it is also possible to implement business rules.

- Load – is the moment of loading previously prepared and processed data to the data warehouse.

The ETL process requires prior determination of the data schema at the target and having a staging server/space. Additionally, in the ETL method, it is necessary to use dedicated tools for creating ETL – for example SSIS (Microsoft SQL Server Integration Services). This is related to higher costs of, for example, licenses.

ELT details

ELT, like ETL, is a tool for obtaining, transferring, and processing data from sources. The main difference between the two processes is that in the ELT approach, data migrated to a data warehouse or Data Lake is stored in the form of raw data. The processing process, therefore, takes place only when the data is taken for analysis or reporting.

In the case of an ELT, the sequence of actions is different from that of the ETL. After extracting data from the sources, the data is immediately loaded to the data warehouse or to the Data Lake (bypassing the Staging layer), where the data is then stored and processed in one place.

The decisive advantage of the ELT concept is that with this approach, we do not need to plan the target data schema in advance. Raw data can be stored e.g. in a Data Lake in a structured and unstructured form.

ELT is more commonly used in No SQL databases where the data is not linked by relationships. It is worth noting that in the case of ELT, we do not have to incur additional expenses related to the Staging server and specialized ETL tools.

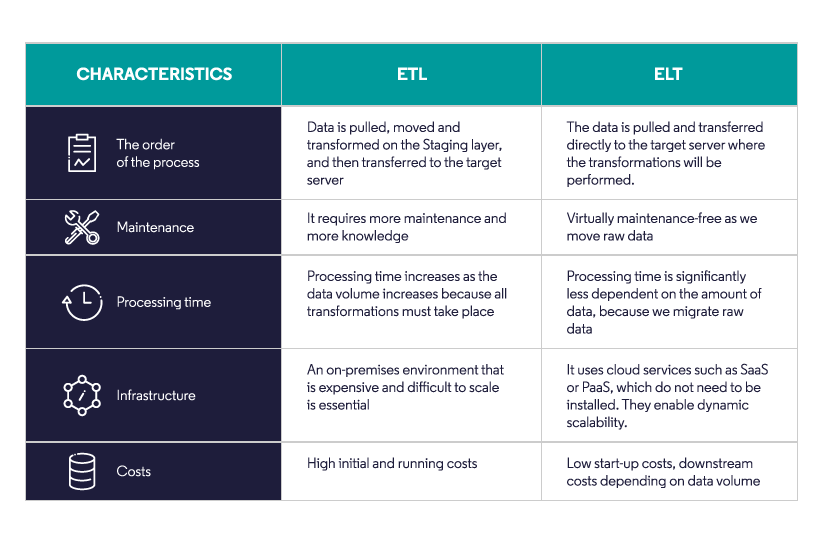

ETL vs ELT main differences

ETL is used mainly in classic data warehouses, where the purposes of data use and the structure in which they are to exist are known. On the other hand, ELT can only be used for data migration, without processing it – and is most often used in data repositories where it is not necessary to plan the structure/scheme of data storage.

Recommendations for end-users and projects

ETL:

- classic approach, known for many years

- better for long term and extensive projects

- requires specialized tools and knowledge

- more costly and time-consuming

- requires specifying the schema of the target data

- structured data and most often connected by relationships are processed

ELT:

- a more modern approach

- better for short-term/ad-hoc projects where the stored data does not need to be structured

- processing of raw data for specific analysis tools (e.g. PowerBI)

- faster and easier data transfer

- does not require specialized tools

- you need a more efficient target server to be able to process large amounts of data

Best practices

In the last few years, I have had the pleasure of using both the ETL and ELT approaches in my projects.

I have recently used the ETL method in a project for the FMCG industry. The data uploaded to the target server was previously transformed for a specific analysis, e.g. in terms of a multidimensional model. The advantage of the classic approach was good structuring and preparation of data using ETL tools. Another advantage was that it was easier to develop or extend the solution – we had raw data and post-transformation data at our disposal. Due to the size of the project, the ELT approach would probably not work.

I used the ELT approach in a project for the energy industry. All data was loaded on the target server as raw data, and then transformed for the multidimensional model and Power BI reports. A big advantage for me was the much faster data loading process than in the case of ETL. Hence, further transformations were easier to perform as all data was already collected on one server. However, I must point out that the project was not as large as the one for the FMCG industry (described above in the case of ETL).

As you can see, depending on the size or characteristics of the project, either approach may be a more appropriate choice. So it’s best to choose a method based on what your specific design requirements will be.